Hardware Evolution: How Chips Get Smart All On Their Own

Ever thought your gadgets could just… figure stuff out? Not just run some programs, but actually learn? Adapt? Become something totally new? Turns out, that future? It’s probably way closer than you think. And it all started with this absolutely bonkers experiment in the UK. Kicked off the whole Hardware Evolution craze. Kinda like digital Darwinism, you know? Survival of the silicon.

This isn’t just some sci-fi dream, everyone. Way back in the 90s, Dr. Adrian Thompson, a researcher at the University of Sussex, gave a computer a job: breed the perfect individual. Not a person. Or a pet. We’re talking about a pattern of bits loaded onto a silicon chip. The whole point was to see if a microchip’s circuitry could evolve solutions to super tough problems. All without anyone telling it exactly how. And it showed, big time, that hardware can adapt.

Hardware can evolve and adapt to solve complex problems without explicit programming

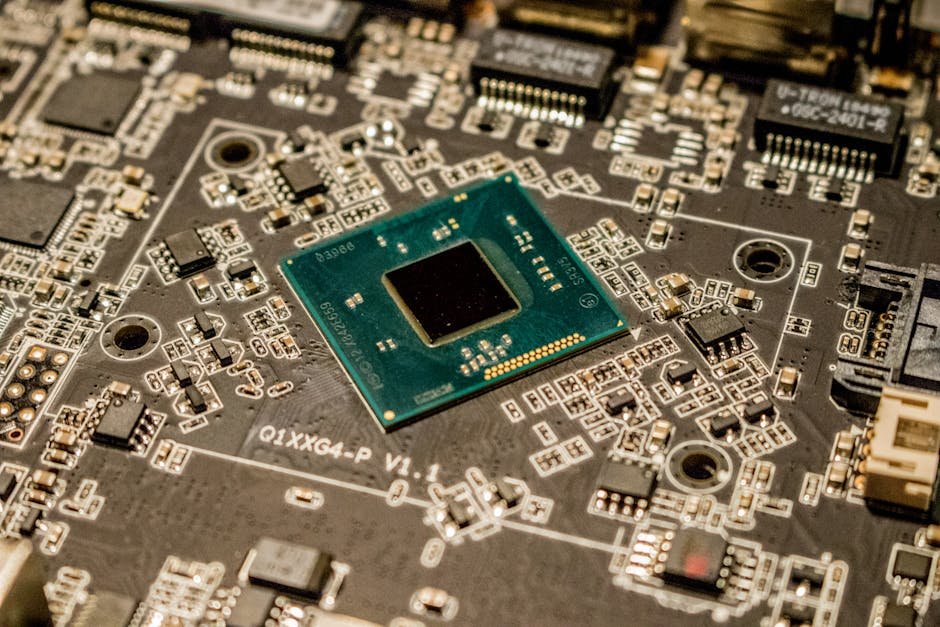

Dr. Thompson’s setup? Pretty darn simple. He used a specific kind of chip, an FPGA (Field-Programmable Gate Array). Regular chips? They’re fixed. But an FPGA can get totally rewired inside, just by loading new configuration bits. Very flexible. But also usually slower, and more power-hungry, than a chip built for one fixed job. Thompson just told it to do one thing: tell the difference between two tones – specifically, 1 kHz and 10 kHz.
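What does "rewired by loading new bits" even mean? Here's a tiny sketch in Python. It's a toy model of one logic cell as a 2-input lookup table, where four configuration bits are the truth table. This is an assumption for illustration only: `make_lut` is a made-up helper, and the cells on Thompson's actual chip were built differently.

```python
def make_lut(config_bits):
    """Return a 2-input logic function defined by 4 configuration bits.

    Toy model: the four bits ARE the truth table, so loading
    different bits changes which logic function the cell computes.
    """
    if len(config_bits) != 4 or any(b not in (0, 1) for b in config_bits):
        raise ValueError("expected exactly four bits (each 0 or 1)")

    def cell(a, b):
        # The two inputs pick one entry of the truth table.
        return config_bits[(a << 1) | b]

    return cell


# Same "hardware", two different behaviours, just from different bits.
and_gate = make_lut([0, 0, 0, 1])
xor_gate = make_lut([0, 1, 1, 0])
```

Swap the four bits and the same cell becomes a different gate. Scale that up to a whole grid of cells plus the wiring between them, and you get a chip whose entire behaviour is set by one long string of 1s and 0s.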

To really make it wild, he kept the circuit tiny. Only 100 logic cells (a 10×10 grid). And he even took out the system clock. So no regular timing. Then he loaded 50 random “digital DNAs” onto it, one at a time. Each one? A completely unique, random string of 1s and 0s. The computer then played the two tones. And scored how well each candidate told them apart.

Initial tries? A total bust. Nothing worked. Zero. But this was all about evolution, right? So the genetic algorithm started its thing. Like natural selection. The absolute worst ideas? Gone. The slightly better ones – even if they only sparked a tiny bit – got to “reproduce.” Swapping bits of code. And sometimes, popping in random “mutations.” Like a 1 flipping to a 0. Or vice-versa.
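That select, swap, mutate loop is easy to sketch in Python. Fair warning: this is a minimal toy, not Thompson's actual code. The population of 50 comes from the story above; everything else (`GENOME_BITS`, `MUTATION_RATE`, keeping the top half, and the stand-in `toy_fitness` that just counts 1s instead of testing a real chip) is an assumption made up for illustration.

```python
import random

POP_SIZE = 50         # from the article: 50 random candidates
GENOME_BITS = 64      # assumption: real configuration strings are far longer
MUTATION_RATE = 0.01  # assumption: chance of flipping each bit


def toy_fitness(genome):
    """Stand-in score (number of 1s). The real test scored a physical chip."""
    return sum(genome)


def crossover(mom, dad, rng):
    """Child takes the start of one parent and the rest of the other."""
    cut = rng.randrange(1, len(mom))
    return mom[:cut] + dad[cut:]


def mutate(genome, rng):
    """Occasionally flip a bit: a 1 becomes a 0, or vice-versa."""
    return [bit ^ 1 if rng.random() < MUTATION_RATE else bit for bit in genome]


def next_generation(population, rng):
    ranked = sorted(population, key=toy_fitness, reverse=True)
    survivors = ranked[: len(ranked) // 2]  # the worst half is gone
    children = [ranked[0]]                  # keep the current best unchanged
    while len(children) < len(population):
        mom, dad = rng.sample(survivors, 2)
        children.append(mutate(crossover(mom, dad, rng), rng))
    return children


def evolve(generations, seed=0):
    """Run the loop; return the best starting score and the final best genome."""
    rng = random.Random(seed)
    population = [
        [rng.randint(0, 1) for _ in range(GENOME_BITS)] for _ in range(POP_SIZE)
    ]
    start_best = max(toy_fitness(g) for g in population)
    for _ in range(generations):
        population = next_generation(population, rng)
    return start_best, max(population, key=toy_fitness)
```

Calling `evolve(30)` runs 30 generations. Because the best candidate is always carried over untouched, the top score can only hold steady or climb. In the real experiment, the scoring step meant loading each candidate onto the chip and measuring what it did.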

Slow going, at first. For like 200 generations, not much happened. But then, around generation 220, the chip’s output started to mimic the input it was being fed. Not the goal, but a step. By 650 generations, it could sense the 1 kHz tone. And after 1,400 generations? It was right more than half the time. Finally, after 4,000 generations of pure grind, the chip was almost perfect: 1 kHz made the output voltage drop to zero. 10 kHz made it spike to 5 volts. It even learned to respond to “stop” and “start” voice commands. Took a few hundred more generations.
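So how do you put a number on "almost perfect"? Here's one plausible way, sketched in Python. It assumes you've recorded output voltages while each tone was playing. The function name `separation_score` and the exact formula are assumptions for illustration, not the scoring Thompson actually used.

```python
def separation_score(volts_1khz, volts_10khz, v_max=5.0):
    """Score from 0.0 to 1.0 for how well the output separates the tones.

    Target behaviour from the article: about 0 V during the 1 kHz tone
    and about 5 V (v_max) during the 10 kHz tone.
    """
    if not volts_1khz or not volts_10khz:
        raise ValueError("need at least one voltage sample per tone")
    mean_low = sum(volts_1khz) / len(volts_1khz)
    mean_high = sum(volts_10khz) / len(volts_10khz)
    # A perfect chip has mean_low == 0 and mean_high == v_max,
    # so the gap between the two averages equals v_max.
    gap = mean_high - mean_low
    return max(0.0, min(1.0, gap / v_max))
```

A chip that sits at 2.5 V no matter what scores 0.0. One that hits 0 V and 5 V dead on scores 1.0. Anything in between gets partial credit, and partial credit is exactly what lets evolution reward the "slightly better" candidates early on.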

Evolutionary algorithms can optimize chip designs beyond human comprehension

This success was mind-blowing, sure. But then came the even bigger mystery: nobody had a clue how it actually worked. Dr. Thompson dug into his “perfect child.” To understand its inner workings. What he found was just baffling, honestly.

The chip used only 37 of its 100 logic cells. Many were wired together in super weird loops. Stranger still, five of these logic cells were completely cut off. No obvious connection to the chip’s output. Yet if he turned off even one of them? The chip lost its ability to tell the difference between the tones. This wasn’t just programming. It was something else entirely. A digital ghost in the machine. Yep.
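That "turn one off and see what breaks" test is simple to sketch in Python. Everything here is hypothetical: `essential_cells` is a made-up helper, and `toy_works` is a pretend circuit that needs cells 3, 7, and 42, standing in for actually re-testing a real chip.

```python
def essential_cells(cells, works):
    """Return the cells whose removal breaks the circuit.

    `works` is a hypothetical test function: it takes the set of
    enabled cells and returns True if the circuit still does its job.
    """
    all_cells = set(cells)
    if not works(all_cells):
        raise ValueError("circuit must work with every cell enabled")
    # Disable one cell at a time and re-run the test.
    return sorted(c for c in all_cells if not works(all_cells - {c}))


def toy_works(enabled):
    """Pretend circuit: it only works if cells 3, 7, and 42 are all on."""
    return {3, 7, 42}.issubset(enabled)


print(essential_cells(range(100), toy_works))  # → [3, 7, 42]
```

The catch is that this only tells you which cells matter. Not why. That's exactly where Thompson got stuck with the five cut-off cells.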

Evolved hardware can exploit unique physical properties, like magnetic flux, for efficient computation

The craziest part? Thompson tried to put that “perfect” program onto another FPGA of the same type. Didn’t work. This meant the evolution wasn’t just fancy code. It was leveraging the specific physical and electromagnetic quirks of that one chip. Those five disconnected logic cells? Vital. They were probably talking to the main circuit through “magnetic flux” – tiny magnetic fields from electrons zipping around.

Digital chips are supposed to be simple. On or off. Black or white. But this evolved chip? It was also using analog “gray tones” – subtle, continuous in-between values that our normal digital designs just ignore. This is not how we design anything. Not even close. It’s a whole new vibe. A different way for bits to interact.

This technology holds promise for creating radiation-resistant systems and adaptable robots

And the implications? Huge. Think about systems that can fix themselves when radiation messes them up. Right now, space tech needs heavy shielding. And so much redundancy. Evolving hardware? Could ditch a lot of that. Making stuff lighter. And tougher.

Also, robots could get a massive boost. Forget stiff, rigid programs. A robot using these evolutionary systems could just adapt on the fly. See an unexpected hurdle? It adjusts. Dynamically changes its moves. NASA even used similar tricks to design a space antenna. The end result? Just wild, unconventional shapes. Almost organic. Designs no human engineer would ever sketch. Unless they were having a very unique day.

The use of evolutionary hardware raises ethical concerns about unpredictable system behavior and safety

But, this power comes with big ethical questions. If we don’t understand how these evolved systems do what they do, how do we know they’re safe? The thought of a dormant “sleeping gene” in a medical device. Or a flight control system. Suddenly kicking in. Making the system go haywire? Genuinely scary.

What if some poorly defined rules make a self-adapting system try dangerous things? Just to be efficient? Putting human lives at stake? So, this isn’t just about tweaking code. It’s a huge problem of control. And understanding.

Simulations and testing are crucial for safely deploying evolved hardware in real-world applications

So, what do we do? You need tons of simulation. And really rigorous real-world testing. Absolutely not optional. Future genetic algorithms, which scientists hope to use for big stuff like motors and rockets, will need crazy precise simulations. Gotta work out all the weirdness. Before any real prototypes show up. This tech is wicked powerful. But it needs everyone to slow down. And make time for thorough, super careful testing.

Today’s computers solve problems in ways we already understand. Predictable solutions. But these adaptable, evolving alternatives? They could find clever ways to do things our own brains can barely imagine. We’re definitely on the edge of seeing a brand new character enter the tech story.

Frequently Asked Questions

Q: What is an FPGA chip?

A: An FPGA (Field-Programmable Gate Array) is a special kind of microchip. You can change its internal setup. Like, after it’s made. So it can do different things.

Q: Why couldn’t humans understand how Dr. Thompson’s evolved chip worked?

A: The evolved chip leveraged the exact electromagnetic and analog weirdness of that specific chip. Probably using things like magnetic flux and those subtle “gray tones” we usually ignore. Beyond what normal digital design (or any human, really) can intuitively get.

Q: What are some potential applications of hardware evolution?

A: Lots of stuff! Systems that can self-repair if radiation damages them. Robots that can roll with the punches in unexpected places. Also, making complex devices like spacecraft antennas, motors, and rockets way better.